Have you heard of DALL·E 2 or Craiyon? Well, if you haven’t, you will soon.

DALL·E 2, an artificial intelligence (AI) model that generates wildly specific images from a simple text prompt, is making waves across the internet. Imagine any scene, even one as zany as “woman ascending an infinity staircase made out of cookies,” and within seconds DALL·E 2 renders a library of images.

This is a game-changer for everyone.

For visual storytellers in particular, it opens up a new universe of ways to tell a story. DALL·E 2, the more advanced program, is still in the beta phase. If you want to play around with it, you have to sign up for the waitlist, or you can try the lighter version called Craiyon, formerly known as DALL·E mini. UPDATE: The waitlist has been eliminated and now everyone can sign up and start creating AI images. Users can purchase additional credits for $15 on top of the free credits.

For organizations with limited budgets especially, Craiyon and DALL·E 2 will be able to instantly generate unique imagery to spruce up reports, blog posts, brochures, and websites. Communications teams will no longer need to rely solely on photographers or graphic designers to create customized visuals. Sounds great, right? Well, before organizations leap to integrate this technology, let’s consider the potential ethical issues it raises for social impact storytelling.

Authenticity

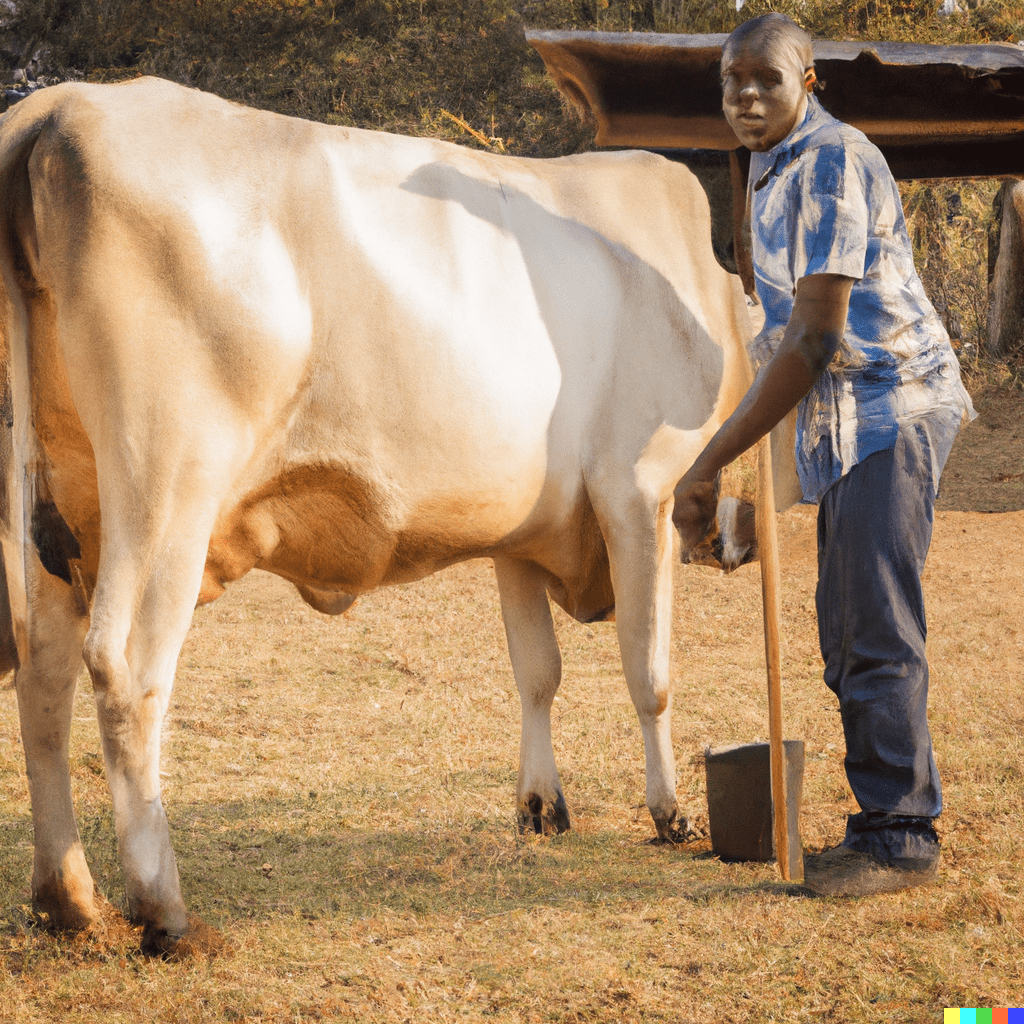

One of the major concerns with AI-generated photography is the authenticity of an image. For example, if asked to generate an image of a Zimbabwean farmer milking a cow or a woman in a headscarf working at a computer, the technology would produce a realistic visual that an organization could publish.

While the depiction may appear accurate, the fact remains that the image does not show real life. The primary aim of visual storytelling is to humanize an issue by providing glimpses into the real lives of others. When these images are computer-generated, the overall authenticity of the storytelling is undermined. It also opens the door to larger questions of credibility, such as: how close are the organization’s ties to its community if it cannot produce real imagery of that community?

Bias

Users of DALL·E 2 also need to be aware of biases inherent in the technology. As reported by Rest of World, all AI-enabled technology is prone to bias, which can perpetuate stereotypes, especially of people of color.

It appears OpenAI is taking steps to curb bias in DALL·E 2, as described in this blog post. Still, communications professionals at NGOs that use the tool need to be vigilant and vet the rendered imagery to ensure it is in line with their work.

Ownership

Another looming concern is copyright. For now, OpenAI has granted users full rights to commercialize the images created with DALL·E 2, but we expect this policy to evolve along with the technology itself and its corresponding use cases. As pointed out by Ziv Epstein, a researcher at the MIT Media Lab’s Human Dynamics Group, image ownership and credit become complicated when an image is produced by a mish-mash of code, natural language, and a pre-existing image library. As these issues get ironed out, organizations need to exercise caution when using AI-generated imagery to ensure proper crediting.

Let’s Discuss!

DALL·E 2 has rightfully created an online frenzy. It is undeniably impressive technology and has opened new dimensions for visual artistry. However, DALL·E 2 is nascent tech, and the conversation around its drawbacks and benefits is just getting started. We’re curious to hear from our community – what’s your perspective on the future of DALL·E 2 and its use in social impact storytelling?

The advent of this new AI world should encourage organizations to sharpen their visual storytelling skills so they can better decide when to leverage tech versus when to opt for a human approach. To learn more about how your organization can increase its know-how in this space, get in touch! We’re happy to help you think through the best use of tools to tell your stories of impact.